Craig Charley

16 May 2014

10 Tips for Hacking Semantic Search

Is it possible to increase search visibility without building links?

Semantic search was a popular topic at BrightonSEO in April, featuring in many talks. I also had the pleasure of taking part in a Semantic Search Roundtable discussion, sponsored by Intelligent Positioning.

My inspiration for this post was Andrew Isidoro's post 'I Am an Entity: Hacking the Knowledge Graph' and companion talk at BrightonSEO. Read his post and then watch the talk below:

Andrew Isidoro at BrightonSEO April 2014

It is also worth reading Krystian Szastok's follow up post 'I Became an Entity: How I'm on the Knowledge Graph' which gives further evidence of becoming an entity with some additional tips.

Andrew and Krystian's posts are both excellent reads, but they focus on individuals and the knowledge graph.

I also want to focus on businesses.

How can businesses get themselves into the knowledge graph, and also take advantage of the other features of semantic search?

First, a bit of theory about why this is so important.

Semantic Search Theory

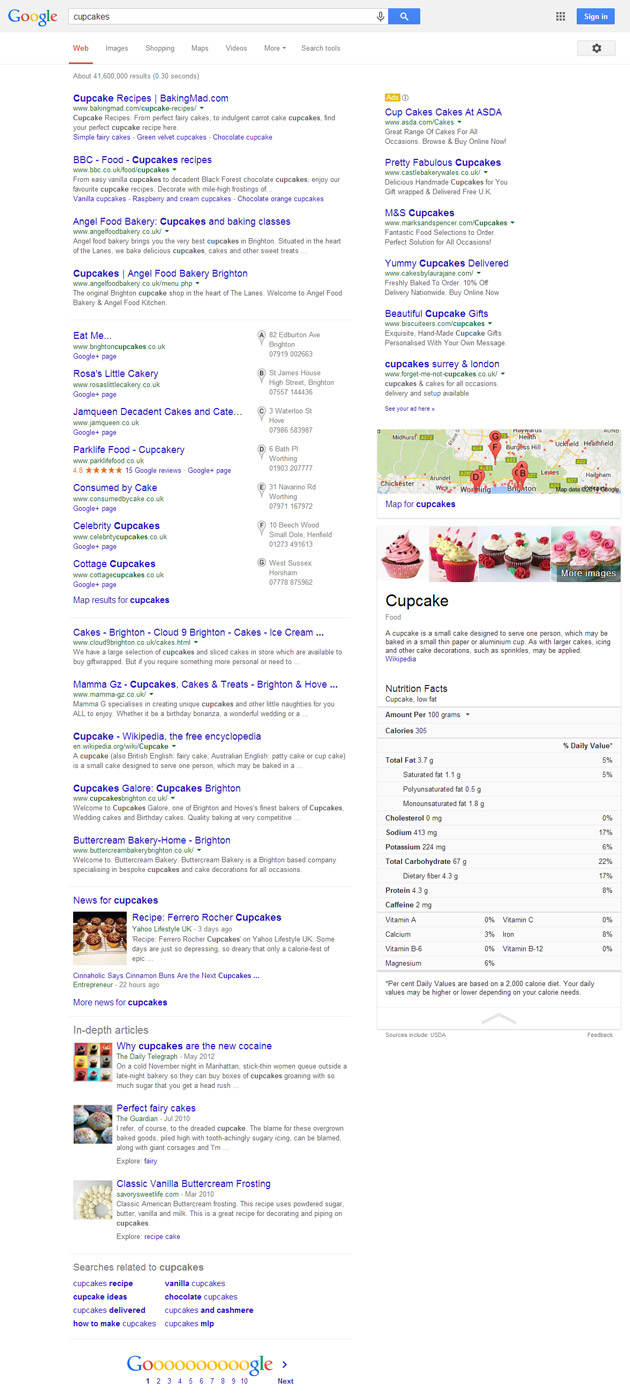

The following graphic featured in at least three separate talks at BrightonSEO:

It demonstrates the features added to SERPs in Google's first 15 years. It was published as part of an Inside Search blog post called 'Fifteen years on - and we're just getting started'.

As the title indicates, Google's search engine is always changing. The search quality team is looking for new ways to provide useful answers for searchers.

Anchor text links still dominate search algorithms, but search engineers are doing everything they can to move away from this and look at other signals instead; to try and undo years of linkspam.

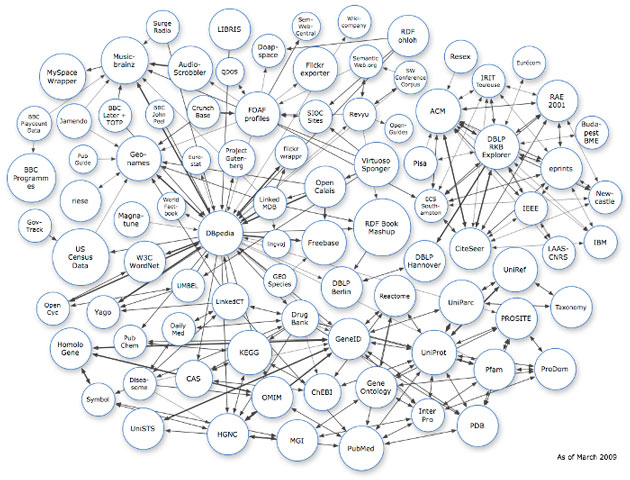

Google wants to understand searcher intent; it wants to understand websites and pages, people and businesses. It wants to understand things. And it wants to understand the relationships between things.

This is called the semantic web and it is the difference between strings and things.

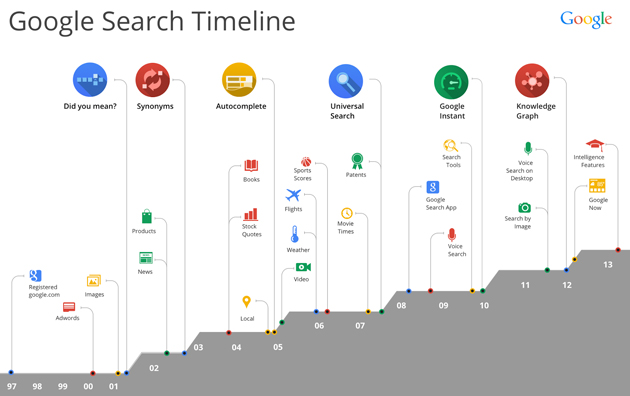

We saw knowledge graph explode in July last year:

And then Google took a big step forward with Hummingbird, a major rewrite of the search engine, which gives Google the ability to 'understand'.

I particularly like Gianluca Fiorelli's summary of the potential benefits to Google:

"Google now is (in theory) able:

- To better understand the intent of a query;

- To broaden the pool of web documents that may answer that query;

- To simplify how it delivers information, because if query A, query B, and query C substantively mean the same thing, Google doesn't need to propose three different SERPs, but just one;

- To offer a better search experience, because expanding the query and better understanding the relationships between search entities (also based on direct/indirect personalization elements), Google can now offer results that have a higher probability of satisfying the needs of the user.

- As a consequence, Google may present better SERPs also in terms of better ads, because in 99% of the cases, verbose queries were not presenting ads in their SERPs before Hummingbird."

I recommend reading Gianluca's full write up on the Moz blog, 'Hummingbird Unleashed'.

As Andrew Isidoro points out, Google isn't getting this right all the time (he references the Knowledge Graph entry that claims Nietzsche was a film star), and so it's up to us to help make their information more accurate.

Semantic Search in Action

The theory of semantic search and what it could mean is interesting, but is it actually happening?

Jon Earnshaw gave two examples of semantic search in action in his talk 'Optimising and Monitoring Online Ecosystems in the Age of Semantic Search'. The first was a SERP for [cosmetic dentistry] which demonstrated the variety of different searches being catered for in one set of results. The other was a demonstration of conversational search.

I'm going to use my own examples in this post, but make sure you watch Jon's full talk to also find out some potential negative effects of semantic search:

Jon Earnshaw at BrightonSEO April 2014

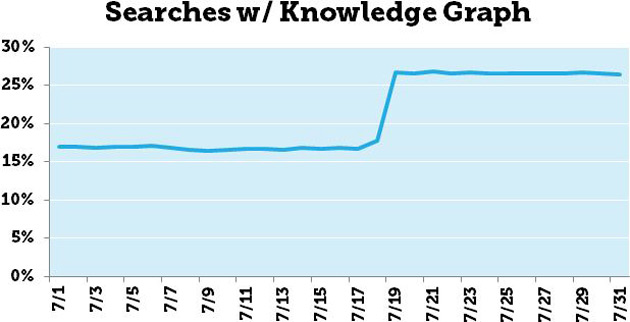

Here is a SERP for [cupcakes], incognito search, with location set to Brighton, UK (based on IP):

This is one of the biggest SERPs I've ever seen! There are so many different types of result here, catering for every search possible. In order of first appearance they are:

- Cupcake recipes (organic listings)

- Brighton cupcake bakeries (organic listings)

- National retail cupcake bakeries (paid listings)

- Sussex cupcake bakeries (local listings)

- Map showing cupcake bakeries in Sussex

- Images of cupcakes

- Knowledge Graph entry for 'Cupcake'

- Wikipedia description

- Nutritional facts (source: USDA)

- Cupcake news articles (!?)

- In-depth articles about cupcakes

- Searches related to cupcakes

Off the top of my head, the only possible results missing are videos and Product Listing Ads.

I searched for a single word, [cupcakes], but Google has answered the following queries:

- [Cupcake recipes]

- [Cupcake bakeries near me]

- [Cupcakes order online]

- [What is a cupcake?]

- [How many calories are there in a cupcake?]

- [What's the latest on cupcakes?]

- [Pictures of cupcakes]

And many, many more...

If I was logged into Google, then the results would probably be more tailored based on past searching habits. If I regularly searched for baking recipes then there might be more cupcake recipes for example.

The popularity of different searcher intents also will have an effect on how many of each type of result is shown.

I would guess that if this was 5 years ago then there would be more than 2 recipe results, but in the past few years cupcakes have become a phenomenon and bakeries have sprung up left, right and centre. As more people search for (and click on) locations of cupcake bakeries instead of recipes, Google tweaks the results to answer the most popular intent.

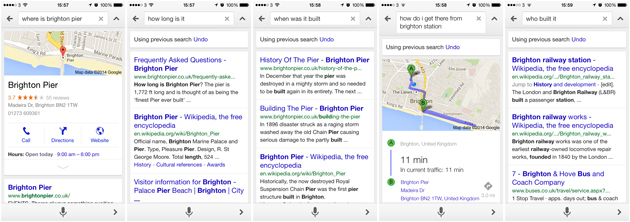

My second example of semantic search in action (again thanks to Jon Earnshaw for the tip) is in voice search.

Check out my conversation with Google below, and then try it out yourself.

After I ask a question about the pier, Google knows that each following question is about the pier, until I ask about another entity, 'Brighton Station', which it then assumes is the subject of the conversation.

Both of these demonstrations show an opportunity to increase your search visibility dramatically by thinking about searchers instead of keywords.

More evidence that Google is moderating queries came from Rob Bucci's study of local SERPs.

So with the theory out of the way, let's get into some practical advice.

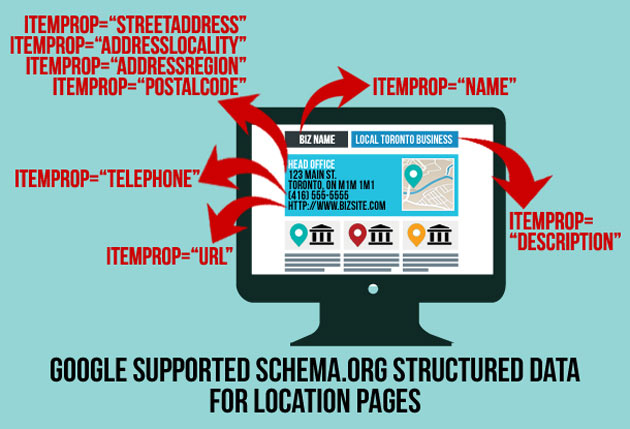

Tip #1: Markup Everything

Search engines are getting better at understanding language and entities, but they need a bit of help. This is where Structured Data Markup comes in.

Our preferred markup language is schema.org because it is supported by all the major search engines.

Marking up your site is our number one tip for increasing your search visibility with semantics.

By adding code to your pages, you are giving search engines the key to understanding what the content on a page is about.

You can define people, places, events, actions and a lot, lot more.

To find out how to implement structured data markup on your own site, read our comprehensive guide.

Currently, we're only seeing clear signals that structured data markup affects rich snippets and also knowledge graph entries (by confirming other data sources).

However, we're starting to see some evidence that structured data markup is helping pages rank for competitive keywords that don't appear on-page.

As schema.org develops and is adopted by more sites (especially larger brands which aren't using it yet) I think that we will see it have a bigger impact on SERPs.

Each course page on our site is marked up as an education event, so straight away search engines know that each page is referring to a course.

We then give more information about the education event - name, location, date, price, reviews etc.
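As a rough illustration of what that markup can contain, here is a minimal Python sketch that builds a schema.org EducationEvent description and wraps it in a script tag. It assumes the JSON-LD syntax (the same vocabulary can also be written inline as microdata), and the function names and every value in it are placeholders, not our real course data or templates.

```python
import json


def education_event_jsonld(name, start_date, venue, locality, price, currency,
                           rating_value, review_count):
    """Build a schema.org EducationEvent description as a JSON-LD dict."""
    return {
        "@context": "https://schema.org",
        "@type": "EducationEvent",
        "name": name,
        "startDate": start_date,  # ISO 8601 date
        "location": {
            "@type": "Place",
            "name": venue,
            "address": {
                "@type": "PostalAddress",
                "addressLocality": locality,
            },
        },
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script element that would sit in the page's HTML."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + '\n</script>'


# Placeholder values only; not real course data.
course = education_event_jsonld(
    name="Example SEO Course",
    start_date="2014-06-12",
    venue="Example Training Centre",
    locality="Brighton",
    price="100.00",
    currency="GBP",
    rating_value="4.5",
    review_count="10",
)
print(as_script_tag(course))
```

Generating the markup from the same data that fills the visible page is one way to keep the two from drifting apart.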

So instead of stuffing our course pages full of keywords that we are targeting, we use structured data markup to explain what the page is about using code.

This allows us to think about the user when creating content, instead of optimal keyword density (ugh).

It also means that we don't have to create five pages for five keyword phrases, just one with the correct markup.

For me, this is the most exciting part of the semantic web.

Unfortunately, it's also the most spammable so it will be interesting to see how it is policed.

Tip #2: Searcher Intent =/= Keywords

Google knows that [cupcake] is a food, just as it knows that [brighton pier] and [brighton station] are physical places. This knowledge allows the search engine to make assumptions about searcher intent that it couldn't make when only considering strings.

When creating content, ask "What queries am I trying to answer?" not "What keywords do I want to rank for?"

Google is no longer presenting results based on explicit queries; it is making assumptions based on user behaviour and huge amounts of data to create a new query before delivering results.

This should, in theory, improve the user experience by producing more relevant results and filtering out the results that the searcher doesn't want to see.

If you are aggressively building links to your recipe site targeting the keyword [cupcakes] but Google decides that 80% of people searching [cupcakes] actually mean [where can I buy cupcakes near my location?] then you're missing the traffic.

Tip #3: Watch the SERPs

Automation is fantastic, and in many cases the only way to scale your efforts. But it's important to actually look at the SERPs and see what is going on.

The graph above, again from Jon Earnshaw's BrightonSEO talk, shows how important it is to actively monitor the SERPs.

At a glance, it would look like Virgin are ranking on page 1 throughout the entire period for [broadband deals], and that is what many tools would report. However, Google is fluctuating between three different landing pages.

It might be that one of those pages (probably the main page in pink) is the one that Virgin wants to rank and have spent the most time and money on optimising for conversions. While it's not ranking, they're losing money.

Jon also demonstrates how these conflicting pages can bring down your visibility (see slides 17, 21, 23).

Monitor the SERPs to make sure you're not shooting yourself in the foot.
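If your rank tracker lets you export the ranking URL alongside the position, a check like the one below can flag this kind of flip-flopping. It is only a sketch: the (date, keyword, position, url) tuple format is a hypothetical export layout, the function name is my own, and the data is made up.

```python
from collections import defaultdict


def find_url_flips(observations):
    """Return, per keyword, each date on which the ranking URL changed.

    `observations` is an iterable of (date, keyword, position, url) tuples,
    a hypothetical export format from a rank tracker.
    """
    by_keyword = defaultdict(list)
    for date, keyword, position, url in observations:
        by_keyword[keyword].append((date, position, url))

    flips = {}
    for keyword, rows in by_keyword.items():
        rows.sort()  # ISO 8601 dates sort chronologically as strings
        changes = []
        # Compare each day's ranking URL with the previous day's.
        for (_, _, prev_url), (date, position, url) in zip(rows, rows[1:]):
            if url != prev_url:
                changes.append((date, prev_url, url, position))
        if changes:
            flips[keyword] = changes
    return flips


# Made-up data for illustration.
observations = [
    ("2014-04-01", "broadband deals", 4, "/broadband"),
    ("2014-04-02", "broadband deals", 5, "/broadband/deals"),
    ("2014-04-03", "broadband deals", 5, "/broadband/deals"),
    ("2014-04-04", "broadband deals", 6, "/bundles"),
    ("2014-04-05", "broadband deals", 4, "/broadband"),
]

for keyword, changes in find_url_flips(observations).items():
    print(f"{keyword}: {len(changes)} landing page changes")
    for date, old, new, position in changes:
        print(f"  {date}: {old} -> {new} (position {position})")
```

A report that only tracks position would show this keyword sitting on page 1 all week; comparing URLs is what surfaces the three changes of landing page.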

Tip #4: Diversify your Content

Remember how there were 12 different types of results for the [cupcakes] search? What if you were able to appear on page 1 of Google 12 times?

Instead of trying to get one piece of content to rank 1st, try diversifying your content and dominate the page. Answer every query the searcher might have.

How could you dominate the [cupcakes] SERP?

If you were a cupcake bakery in Brighton you could create the following content, with the potential to rank all of it on page 1:

- Localised page about your brick and mortar store

- Images of your cupcakes

- Video of your baking process

- Cupcake recipes

- Retail page for ordering cupcakes online

- Nutritional information about your cupcakes

- Cupcake news

- Blog posts about cupcakes

- Local Google+ page for your store

This gives you an advantage because you're giving users a huge number of ways into your site, and so into your brand.

In effect, you're expanding your marketing funnel and pulling in potential leads at all stages of the buying cycle, all from one SERP.

Tip #5: Develop Inhouse Experts

Authorship is probably the most discussed element of semantic search to date. Not everyone has a Wikipedia page, so Google introduced rel=author.

Combined with Google+, rel=author gives Google the building blocks to start understanding people who create content on the web.

Using data from my social profiles and the articles I have written, Google can build a clear picture of "Craig Charley" the digital marketer. It can then use signals to determine how authoritative I am in certain areas and increase my visibility accordingly.

This isn't necessarily in effect right now but I believe that is to do with low take-up. The more people use rel=author, the more Google will trust it as a signal.

The main issue for brands is that most of their content is created by marketers, who are not always experts in the subjects they write about.

Businesses need to start training their experts (the ones making the products, running the services etc.) to write content and engage in communities.

Build up your best staff as entities tied to your brand.

If your strategy is to answer search queries, then who is better to do so than the experts in your business?

Subject matter specialists are also far more effective in engaging with communities - marketers can be spotted a mile away by savvy forum users.

Also remember to use rel=publisher to tie the content back to your brand.

Tip #6: Don't be Afraid to Link Out

Just this minute I received an email from Search Engine Journal with their updated guidelines for authors.

Item number 1: All articles must now:

"Include at least two external citation links per post.

Fundamentally, links are about citations. They tell the reader that the author isn't operating in a vacuum, that they're incorporating 3rd party perspectives and evidence in support of their argument. Otherwise, it's just a theory without substantiation."

Although this is a link-based tip I'm including it as semantic search because it is about linking out, not building links.

Link to sources and further reading in your blog posts and resources, create pages linking to other services in your area or niche (see our top places to eat in the North Laine!)

These links are not just about the link itself but about the topics being discussed so that Google can place you in an ecosystem.

Link to nearby places to boost local signals, link to authoritative sources in your niche to boost your authority and relevance and use links to make your pages the best they can be - even if that means pushing visitors offsite.

Tip #7: Create an Ecosystem

Another hat tip to Jon Earnshaw - think of your web properties as an ecosystem.

Your site(s) should be structured in a way that makes sense to users and robots.

Try not to create conflicting content (especially across domains/subdomains) as this can affect the visibility of all content. Google is better at identifying relationships between websites than many people think.

Create the complete web experience for your users so that they don't need to leave your site (diversified content!) but provide exits where absolutely necessary (link out!).

Aim to become part of a larger ecosystem in your niche by linking out and creating content to attract links from other sites.

Tip #8: Use Google+

I am amazed at how many brands still don't use Google+, or don't even have a filled-out profile.

Not only does Google love injecting G+ results into SERPs, it also uses it to source data.

It is essential to fill in every box of your Google+ business page and make sure that it matches up 100% to the information on your website. The information on your website should also be marked up, so that Google can compare telephone numbers, addresses etc. with ease.
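A quick way to spot mismatches is to normalise the name, address and phone number from each source and compare them. The sketch below assumes you have already copied both listings into simple dictionaries; the field names, the UK-only phone handling and the sample business are all my own assumptions.

```python
import re


def normalise_phone(phone):
    """Keep digits only and rewrite a leading UK '44' country code as '0'.

    Assumes UK numbers; other countries would need their own rules.
    """
    digits = re.sub(r"\D", "", phone)
    if digits.startswith("44"):
        digits = "0" + digits[2:].lstrip("0")
    return digits


def normalise_text(value):
    """Lowercase, drop punctuation and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


def compare_listings(website, profile):
    """Return the fields whose normalised values differ between two listings."""
    normalisers = {
        "name": normalise_text,
        "address": normalise_text,
        "phone": normalise_phone,
    }
    mismatches = {}
    for field, normalise in normalisers.items():
        a, b = website.get(field, ""), profile.get(field, "")
        if normalise(a) != normalise(b):
            mismatches[field] = (a, b)
    return mismatches


# Made-up listings for illustration.
website = {
    "name": "Example Cupcakes Ltd.",
    "address": "1 Example Street, Brighton, BN1 1AA",
    "phone": "+44 1273 000000",
}
profile = {
    "name": "Example Cupcakes Ltd",
    "address": "1 Example St, Brighton BN1 1AA",
    "phone": "01273 000000",
}

for field, (on_site, on_profile) in compare_listings(website, profile).items():
    print(f"{field}: website has {on_site!r}, profile has {on_profile!r}")
```

Here the name and phone number match once formatting is stripped, but "Street" versus "St" is flagged, which is exactly the kind of small inconsistency worth tidying up by hand.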

Rel=author and rel=publisher are both validated using Google+, so how likely is it that other information is as well?

Google is screaming for information about your business, so give it to them!

Tip #9: Clean Up & Gain Citations

As Google becomes better at understanding language, links become less important.

A patent covered this year by Bill Slawski got a lot of people excited as it uses the phrase "implied links". This has been taken to mean a citation.

Simon Penson discusses the implications of implied links in detail here, but to summarise, it means that at some point web mentions could become as important as links, especially if those citations come from authoritative sources.

So not every mention of your brand needs to have a link. Relax!
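If you want a feel for how often a page mentions your brand with and without a link, a small script can count both. This is a minimal sketch using Python's standard html.parser module; the class name and sample HTML are made up, it treats any mention inside an anchor as "linked" wherever the link points, and it says nothing about how a search engine actually weighs mentions.

```python
from html.parser import HTMLParser


class MentionCounter(HTMLParser):
    """Count brand mentions inside and outside <a> elements."""

    def __init__(self, brand):
        super().__init__()
        self.brand = brand.lower()
        self.link_depth = 0  # greater than 0 while inside an <a> element
        self.linked = 0
        self.unlinked = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self.link_depth += 1

    def handle_endtag(self, tag):
        if tag == "a" and self.link_depth > 0:
            self.link_depth -= 1

    def handle_data(self, data):
        # Case-insensitive substring count of the brand name in this text node.
        count = data.lower().count(self.brand)
        if self.link_depth:
            self.linked += count
        else:
            self.unlinked += count


# Made-up page fragment for illustration.
html = (
    '<p>We love <a href="https://example.com">Example Bakery</a>. '
    "Example Bakery also sells brownies, and example bakery delivers.</p>"
)

counter = MentionCounter("Example Bakery")
counter.feed(html)
counter.close()
print(f"linked mentions: {counter.linked}, unlinked mentions: {counter.unlinked}")
```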

Citations are also important for validating information about your business, as Andrew alluded to in his talk:

Do some branded searches and clean up your profiles across the web so that the information matches up to your website and G+ page.

Create some cool stuff that will get people talking about your brand, even if they aren't linking to you.

Your final goal will be to build up a strong enough online presence to warrant Wikipedia & Freebase entries. Remember, you can't just add these yourself or they will be taken down.

However, one tip is to build up a good Wikipedia profile by editing a lot of entries. Once you've done a fair amount of entries then you might get away with adding a reference or two to your site (as long as you can link to something unique that offers value to the entry).

Tip #10: Don't Spam

Finally, this tip can be applied to any area of SEO: Please don't spam these tactics!

The link graph is broken because everyone spammed it to death. A lot of methods for link building in a natural way have been ruined by spam including guest blogging and press releases.

Of course, you could mark up your site with fake information, pay for mentions, buy Google+ followers and come up with some kind of 'optimal outbound link ratio'.

However, Google is paying attention all the time to spam techniques. When links were first used as a measure of authority, they wouldn't have thought 'this could get spammed easily', but now they will be trying to make sure that any new developments are as spam proof as possible.

Rich snippets and authorship have both been withdrawn as a result of spam.

So play the game and become an entity. Or even better, an ecosystem of entities.

Be the most useful answer.

There are a lot of people saying that if link building is dead then SEO is dead, but all of the tactics in this post are designed to increase search visibility by optimising your site and business.

Further reading:

- Google Launches Knowledge Graph To Provide Answers, Not Just Links - Danny Sullivan

- Google’s Hummingbird Deepens Semantic Search Results - David Amerland

- The Incredible Impact of Knowledge Graph Cards on Google Glass Search Results [Research] - Glenn Gabe

- Knowledge Graph Optimisation - AJ Kohn

- The Changing Face of Knowledge Graph - Ned Poulter

- Semantic SEO: Making the Shift from Strings to Things - Aaron Bradley